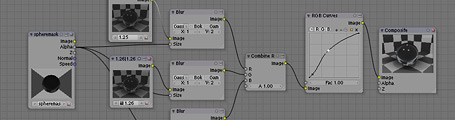

Here’s another compositing test from my experiments with cekuhnen on the blenderartists forums. It uses a simple Combine RGBA node, which I wrote the other day. It doesn’t exist in Blender yet, but a patch is available in the tracker. Without that patch, you can replicate the functionality with a slightly convoluted node network. The technique takes three versions of the refracting material, each with a slightly different index of refraction, and recombines them so that each render supplies one of the R, G and B channels. I’ve blurred them slightly, using a mask taken from the sphere on another render layer.
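The channel recombination can be sketched outside Blender in plain Python. This is a minimal illustration, not the patch’s code or Blender’s API: images are assumed to be lists of rows of `(r, g, b, a)` float tuples, `combine_rgba` is a hypothetical helper, and taking alpha from the middle render is an arbitrary choice. As with normal dispersion, red is assumed to come from the lowest-IOR render and blue from the highest.

```python
from typing import List, Tuple

# Hypothetical image representation: a list of rows, each row a list of RGBA pixels.
Pixel = Tuple[float, float, float, float]
Image = List[List[Pixel]]


def combine_rgba(red_src: Image, green_src: Image, blue_src: Image) -> Image:
    """Take R from red_src, G from green_src and B from blue_src.

    Alpha is copied from green_src (an arbitrary choice for this sketch).
    All three images must have identical dimensions.
    """
    if not (len(red_src) == len(green_src) == len(blue_src)):
        raise ValueError("images must have the same number of rows")
    out: Image = []
    for row_r, row_g, row_b in zip(red_src, green_src, blue_src):
        if not (len(row_r) == len(row_g) == len(row_b)):
            raise ValueError("images must have the same number of columns")
        # Each output pixel mixes one channel from each IOR render.
        out.append([(pr[0], pg[1], pb[2], pg[3])
                    for pr, pg, pb in zip(row_r, row_g, row_b)])
    return out


if __name__ == "__main__":
    low_ior = [[(0.9, 0.1, 0.1, 1.0)]]   # render with the lowest IOR
    mid_ior = [[(0.2, 0.8, 0.2, 1.0)]]   # render with the middle IOR
    high_ior = [[(0.3, 0.3, 0.7, 1.0)]]  # render with the highest IOR
    print(combine_rgba(low_ior, mid_ior, high_ior))  # [[(0.9, 0.8, 0.7, 1.0)]]
```

Where the three renders agree (areas without refraction), the output matches the original image; only where the refracted views differ do the channels separate into coloured fringes.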

It doesn’t hold up too well in close-up, since you can see the three separate layers quite sharply rather than a smooth blend; for that you’d need more IOR layers. I also tried doing this with material nodes, which would be a much better approach, but the way raytraced refractions are handled there seems a little weird, and it didn’t work out. Hopefully this can be cleaned up in the future, perhaps with dedicated raytracing nodes that output RGB, alpha or whatever else is needed.

I saw your conversation about refractions and render layers. I was experimenting, and an object on one layer showed up in a render layer for a different layer, through the refraction of the refracting object. Through the refraction you can see objects on all layers, even ones that aren’t enabled.